Your AI was sharper three months ago. You are not imagining it.

Most businesses treat AI like a software update. Set up a tool in January, expect it to be smarter in April. When the output starts feeling flat, they blame the model.

The model is not the problem. The foundation is.

The Plateau Happens on Day One

Here is what actually happens when you implement an AI system.

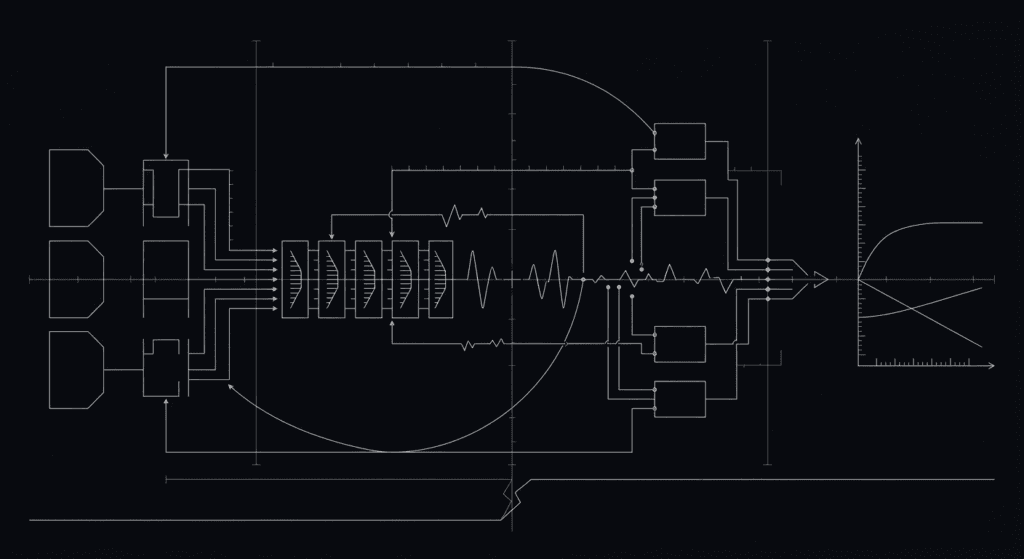

You set it up with a prompt. Maybe it includes your company values, your target customer, your product positioning. You feed it some examples. The output feels sharp because the context is fresh. The AI is working from the version of your business that exists in that prompt.

Then you go back to work.

Your positioning shifts. You refine your pitch based on Q1 results. Your sales team figures out which objections matter most and adjusts their approach. A new competitor launches. Your pricing strategy evolves. Your brand voice tightens.

The AI knows none of this.

Three months later, the AI is generating content from the business context of January. It does not know you dropped three product features. It does not know your target customer tightened from “SMBs” to “SMBs with under 100 employees.” It does not know your main competitor just cut their price by 30 percent. It does not know your most successful sales rep opens with a different hook than the one in the examples you gave it.

The model is probably better in April than it was in January. The foundation underneath it is three months stale. There is no mechanism pulling your evolving business reality back into what the AI knows.

What Static Implementation Looks Like

A B2B SaaS company implemented ChatGPT to help write sales emails. They spent a Friday building a prompt with their brand voice, their core value props, and their ideal customer profile. Good system. Good prompts. Immediately useful output.

Six weeks later, the emails felt generic.

Not generic in the way bad writing is generic. Generic in a specific way: the emails were not reflecting what the company had learned about its actual sales process. The reps had discovered that cold email subject lines needed to mention a specific competitor by name to get opens. The AI did not know this. The company had repositioned from “compliance automation” to “audit risk reduction,” a meaningful shift in how they talk to the market. The AI was still using the old framing.

The company’s response was typical. They assumed the tool had gotten worse. They evaluated a more expensive model. They considered hiring a consulting service.

What they actually needed was a fifteen-minute meeting once a week to update the prompt with what they had learned.

They did not do it. Most companies do not.

The Feedback Loop Is Missing

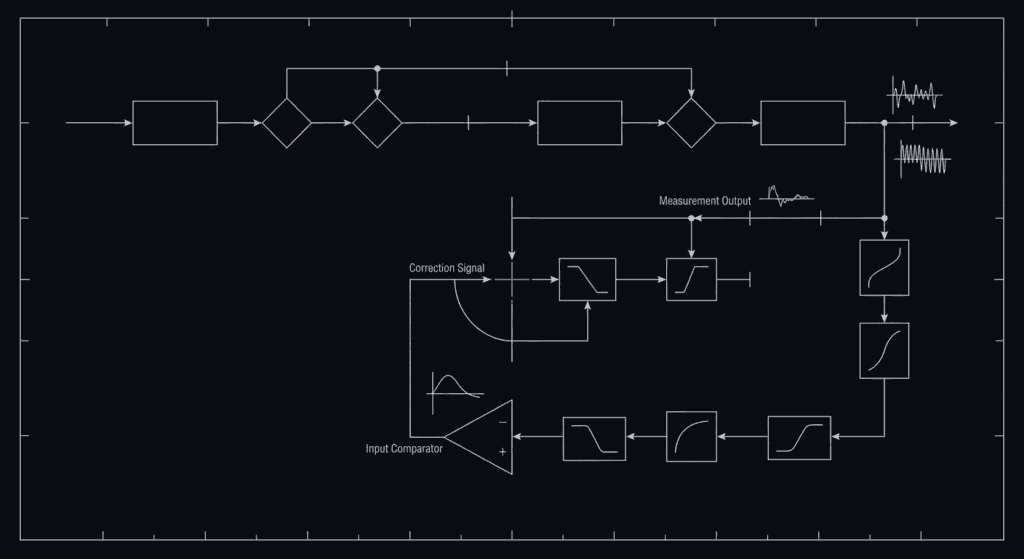

Here is what the mechanism should look like.

Your business changes. Your sales team learns something new about the market. Your positioning sharpens. Your customer research reveals a pattern. Your competitor does something that matters. Within days, that information should flow back into the AI’s context layer. The prompt updates. The examples get refreshed. The output shifts to reflect current reality, not January reality.
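One way to picture that context layer is a small set of dated statements that get assembled into the prompt, so that updating a statement updates what the AI sees. The sketch below is a minimal illustration, not a description of any particular tool. The names `ContextEntry`, `update_entry`, and `build_prompt`, and all of the dates, are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ContextEntry:
    topic: str        # e.g. "positioning" or "target customer"
    statement: str    # what the AI should currently believe
    updated: date     # when someone last changed or confirmed it


def update_entry(entries: dict[str, ContextEntry], topic: str,
                 statement: str, today: date) -> None:
    """Add or replace the statement for a topic and stamp the date."""
    entries[topic] = ContextEntry(topic, statement, today)


def build_prompt(entries: dict[str, ContextEntry]) -> str:
    """Assemble the prompt text from the current entries, sorted by topic."""
    lines = ["Current business context:"]
    for topic in sorted(entries):
        e = entries[topic]
        lines.append(f"- {e.topic} (as of {e.updated.isoformat()}): {e.statement}")
    return "\n".join(lines)


# Day one: the context reflects January (hypothetical dates).
context: dict[str, ContextEntry] = {}
update_entry(context, "positioning", "compliance automation", date(2024, 1, 15))
update_entry(context, "target customer", "SMBs", date(2024, 1, 15))

# Weeks later the business has moved, and the owner records what changed.
update_entry(context, "positioning", "audit risk reduction", date(2024, 3, 4))
update_entry(context, "target customer", "SMBs with under 100 employees", date(2024, 3, 4))

prompt = build_prompt(context)
print(prompt)
```

The point of the design is that the old framing is overwritten, not appended to, so the prompt never carries January and March positioning side by side.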

This does not happen automatically. It does not happen because you installed a tool. It requires a decision and a structure.

Someone needs to own the feedback loop and maintain the context layer: keep it current, check whether the examples still represent the business, test whether the output still matches reality.

In the companies where AI output improves over time, this loop exists. Maybe it is informal. Maybe it is a weekly check-in where someone pulls the AI’s recent outputs and compares them to what the business actually needs. In some companies it is a quarterly review where the person closest to the AI sits down and asks: “What have we learned in the last ninety days that the AI should know?”
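The ninety-day question can be backed by a simple staleness check: list every topic in the context layer that nobody has reviewed within the window. This is a rough sketch under assumed names. `stale_topics`, the topic list, and the dates are made up for illustration, and the 90-day default only mirrors the quarterly review described above.

```python
from datetime import date, timedelta


def stale_topics(last_reviewed: dict[str, date], today: date,
                 max_age_days: int = 90) -> list[str]:
    """Return topics whose last review is older than max_age_days, sorted by name."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(topic for topic, reviewed in last_reviewed.items()
                  if reviewed < cutoff)


# Hypothetical review dates for three parts of the context layer.
last_reviewed = {
    "positioning": date(2024, 1, 15),
    "pricing": date(2024, 3, 20),
    "competitors": date(2024, 1, 15),
}

overdue = stale_topics(last_reviewed, today=date(2024, 4, 20))
print(overdue)
```

Anything the check returns becomes the agenda for the next review.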

In the companies where AI output decays, there is no loop. The AI was good on day one because the prompt was built with fresh expertise and attention. After that, it is static. The foundation rots.

Why This Matters More Than You Think

The gap between a good AI implementation and a stale one does not feel like the difference between a 9 and a 6. It feels like the difference between a tool you use and a tool you stopped trusting.

Your reps stop using the generated emails because they do not match what actually works. Your content team stops brainstorming with the AI because it keeps suggesting angles you explored two months ago. Your support team stops using it for responses because it misses the nuance of what your current customers care about.

Once you stop trusting it, you stop using it. Once you stop using it, the tool becomes expensive dead weight.

Here is the actual cost. You are not just wasting money on the subscription. You are wasting the leverage you had on day one. You solved a real problem with real AI. You got back hours and energy. Then you let the solution rot because nobody maintained the foundation.

What Maintenance Looks Like

You do not need a large operation. You need ownership and rhythm.

Pick the person on your team closest to the work. If the AI generates sales content, that is your head of sales or your most successful rep. If the AI generates customer support responses, that is your support lead. That person needs a monthly review of what the AI is actually producing and whether it matches reality.

They need permission to update the context and log what they learn. And they need to track whether the feedback moved the needle. Did changing the prompt make the output better? Are people using it more? Did the problem change?
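Tracking whether a change moved the needle can be as plain as comparing how often people used the AI's output before and after the prompt was updated. The sketch below assumes you can record, for each generated draft, whether a rep actually sent it. The `Draft` record, the `adoption_rate` helper, and the sample data are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Draft:
    created: date
    sent_by_rep: bool   # True if a rep actually used the AI draft


def adoption_rate(drafts: list[Draft], start: date, end: date) -> Optional[float]:
    """Share of drafts created in [start, end) that a rep sent; None if no drafts."""
    window = [d for d in drafts if start <= d.created < end]
    if not window:
        return None
    return sum(d.sent_by_rep for d in window) / len(window)


# Hypothetical log: the prompt was updated on March 4.
change_date = date(2024, 3, 4)
drafts = [
    Draft(date(2024, 2, 20), False),
    Draft(date(2024, 2, 22), False),
    Draft(date(2024, 2, 27), True),
    Draft(date(2024, 3, 1), False),
    Draft(date(2024, 3, 5), True),
    Draft(date(2024, 3, 8), True),
    Draft(date(2024, 3, 12), False),
    Draft(date(2024, 3, 14), True),
]

before = adoption_rate(drafts, date(2024, 2, 19), change_date)
after = adoption_rate(drafts, change_date, date(2024, 3, 18))
print(f"before: {before:.0%}, after: {after:.0%}")
```

A number like this does not prove the prompt change caused the difference, but it gives the owner of the loop something concrete to look at in each review.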

This is an organizational problem, not a technical one. You need someone who understands the business outcome, has permission to adjust the system, and does it on a predictable schedule.

The Positioning Trap

Here is where this gets tricky.

The company that sells you the AI tool has no incentive to tell you this.

If the narrative is “better models equal better results,” their job is to sell you the new model. If the narrative is “you have to maintain your AI by building feedback loops back into the context layer,” their job is to help you build the organizational capacity for that maintenance work, which is a much harder thing to sell.

The first narrative is simpler to sell. The second is actually true.

The Choice in Front of You

If you have been using AI for six months and the output feels stale, you have a choice.

You can assume the tool is broken and switch to something new. You will get a fresh bounce for thirty days, then hit the same plateau. The foundation problem follows you because you never addressed it.

Or you can decide the tool is not broken. Your implementation is static. And you can build a feedback loop that keeps the AI’s context layer current with your actual business.

The tools are getting better every month. Your business is changing faster than that. If you want your AI to track the real world instead of the world from January, you have to pull the actual world into the system.

The upgrade does not come from the model. It comes from you.
